Hello everyone, in this post, I will introduce LangChain 0.1.0. Over the past year, LangChain has seen remarkable growth. With the 0.1 release, the team's main goal has been to enhance stability and make the library more production-ready.

This involved rearchitecting for improved versioning and overall stability. The team has engaged with the community to understand which aspects of LangChain are most valued, and I will highlight their efforts in these core areas in this post.

This post covers integrations, observability, streaming, composability, output parsing, retrieval, and agents, accompanied by hands-on code to improve your learning experience. Stay tuned and enjoy the journey with me!

What is Observability?

Observability is crucial when you’re creating complex applications using language models. Whether you’re working with long sequences of chains or more interactive behaviours, it’s essential to know exactly what’s happening inside your application. This understanding is vital for making improvements.

LangChain puts a strong focus on observability. This means you can easily see the inputs and outputs of the language model, as well as every step in between. The team has designed LangChain with several options for achieving this.

Let’s explore a few of these options:

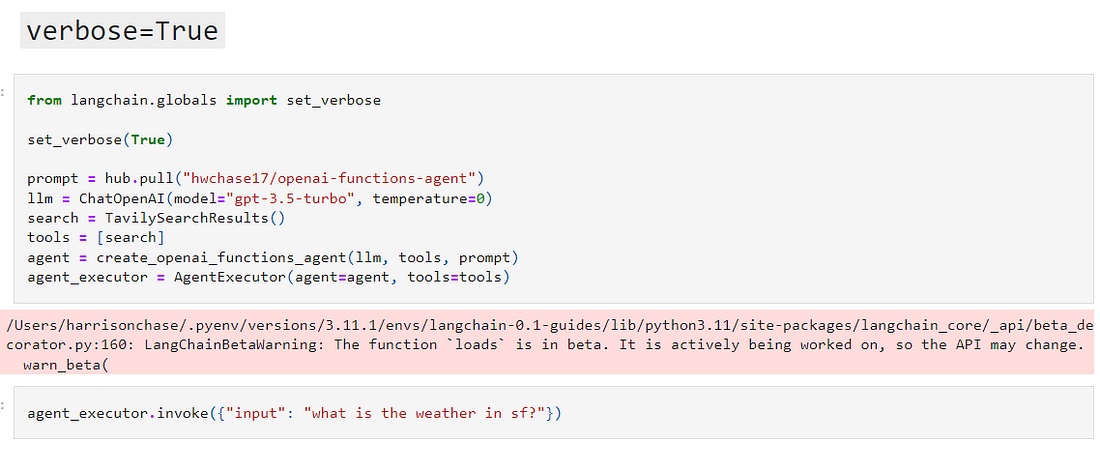

- Verbose Mode: This was one of LangChain's earliest features. It shows the main steps happening in your application, giving a good overview without overwhelming detail.

- Debug Mode: If you need more detailed information, Debug Mode is useful. It logs extensive data about every step, showing the exact prompts used, the responses, the number of tokens, etc. This mode is especially helpful for complex applications where you need to see everything in detail.

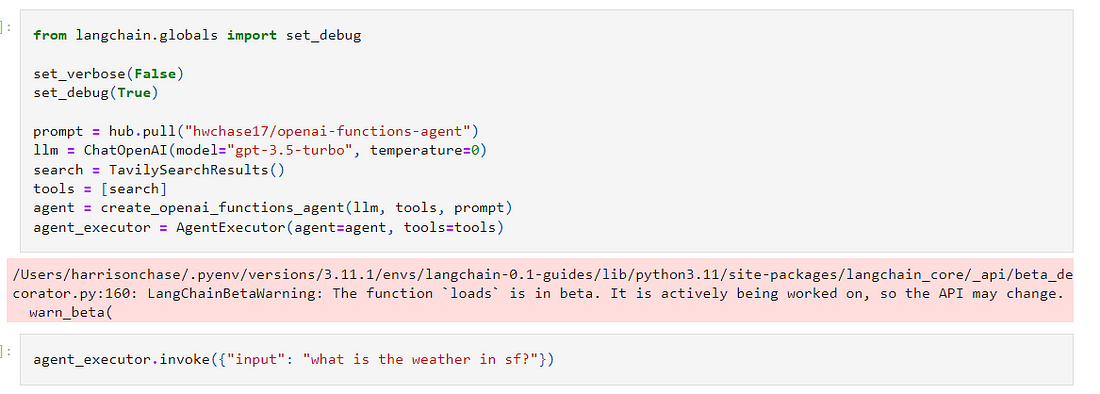

- LangSmith: For a more advanced and user-friendly way to track what's happening in your application, the team developed LangSmith. This tool lets you log and analyze data more effectively. You can see important events, inputs, and outputs, and explore the data in a more intuitive interface.

LangChain's observability features are designed to help you understand your application thoroughly, which is essential for building effective language model applications.
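To make the difference between verbose and debug output concrete, here is a minimal sketch in plain Python. It is not LangChain's implementation: `run_chain`, the step names, and the fake "model" are all made up for illustration. In LangChain itself, as far as I know, the corresponding switches are `set_verbose` and `set_debug` from `langchain.globals`.

```python
from typing import Any, Callable, List, Tuple

# Toy stand-in for a chain: an ordered list of (name, function) steps.
Step = Tuple[str, Callable[[Any], Any]]


def run_chain(steps: List[Step], value: Any,
              verbose: bool = False, debug: bool = False) -> Tuple[Any, List[str]]:
    """Run each step in order and collect a log of what happened."""
    log: List[str] = []
    for name, func in steps:
        result = func(value)
        if debug:
            # Debug level: record the exact input and output of the step.
            log.append(f"[{name}] input={value!r} output={result!r}")
        elif verbose:
            # Verbose level: record only that the step ran.
            log.append(f"[{name}] finished")
        value = result
    return value, log


# Hypothetical steps: format a prompt, then call a fake "model".
steps: List[Step] = [
    ("prompt", lambda topic: f"Tell me a joke about {topic}"),
    ("fake_model", lambda prompt: prompt.upper()),
]

answer, debug_log = run_chain(steps, "bears", debug=True)
for line in debug_log:
    print(line)
```

Debug output is noisier but shows exactly what went into and came out of each step, which is what you want when a long chain misbehaves.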

What are Integrations?

Let's explore LangChain's wide range of integrations and how they simplify the process of creating complex language applications.

Integrations Overview:

LangChain's integrations are organized in two ways: by provider and by component. This helps users quickly find what they need, whether they are looking for a specific provider's tools or for a particular kind of component.

Provider-Based Integrations:

For instance, if we look at OpenAI, LangChain integrates with a variety of its tools, ranging from language models to document loaders. If you have access to OpenAI's services, you can easily incorporate them into your LangChain projects.

Component-Based Integrations:

LangChain also categorizes integrations by component, such as language models, chat models, document loaders, and more. Each component has detailed documentation and examples, making it easy to get started.

Unique Features:

What sets LangChain apart are its specialized toolkits and its ability to handle various document types. It's not just about accessing language models but also about transforming and storing data efficiently.

New Integrations and Community Input:

The LangChain community is constantly adding new integrations. There's even a dedicated page on langchain.com to showcase new and trending integrations, where users can share their favourites.

Updates and Improvements:

LangChain has recently revamped its integration process. Integrations are now separate from the main package, allowing for more stable core code and better version control. This makes LangChain more robust and production-ready.

What is Composability?

The goal of making LangChain more composable is simple: to allow easy modification and customization of chains. Users often need to adjust not just prompts, but also data processing and the order of operations. Composability makes these tasks straightforward.

What is LangChain Expression Language?

LangChain Expression Language (LCEL) is an orchestration framework designed to simplify how we create and modify language model applications. It brings a number of benefits over wiring the same steps together by hand.

Key Features of LangChain Expression Language:

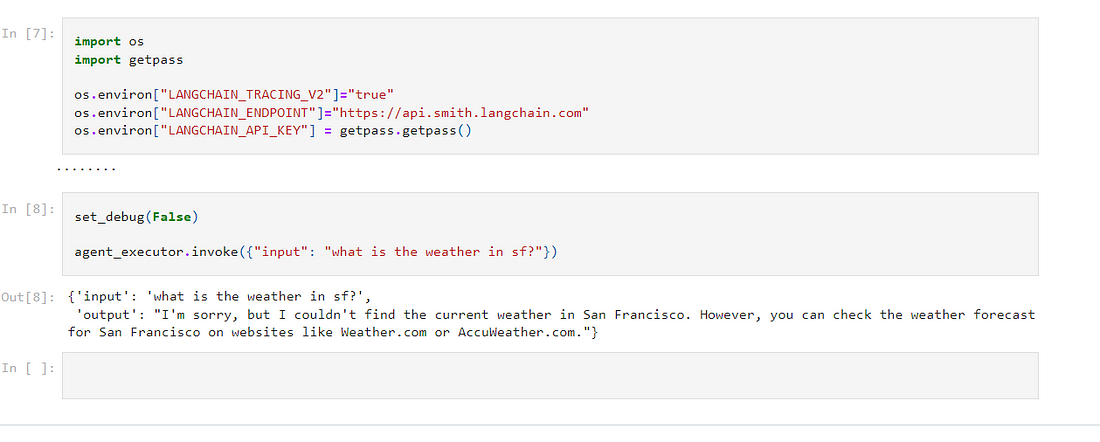

- Streaming Support: Essential for real-time processing in building LLM applications.

- Async and Batch Support: Allows applications to run in different modes without rewriting code.

- Parallel Execution: Speeds up the process by handling multiple operations simultaneously.

- Retries and Fallbacks: Offers robustness against failures and unexpected outputs.

- Access to Intermediate Results: Makes debugging easier and lets you show progress to users while a chain is still running.

- Observability: Automatically logs all steps for easy tracking and analysis.
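Two of the features above, fallbacks and batch support, are easy to picture with a small sketch. The code below is plain Python and only illustrates the idea; `with_fallbacks`, `batch`, `flaky_model`, and `backup_model` are hypothetical helpers, not LangChain's own implementation.

```python
from typing import Callable, List


def with_fallbacks(primary: Callable[[str], str],
                   fallbacks: List[Callable[[str], str]]) -> Callable[[str], str]:
    """Return a function that tries `primary`, then each fallback in order."""
    def run(text: str) -> str:
        last_error = None
        for candidate in [primary, *fallbacks]:
            try:
                return candidate(text)
            except Exception as error:  # a real system would catch narrower errors
                last_error = error
        raise RuntimeError("all candidates failed") from last_error
    return run


def batch(func: Callable[[str], str], inputs: List[str]) -> List[str]:
    """Apply the same callable to every input without changing the callable."""
    return [func(item) for item in inputs]


def flaky_model(text: str) -> str:
    # Hypothetical primary model that is always unavailable.
    raise TimeoutError("primary model unavailable")


def backup_model(text: str) -> str:
    # Hypothetical backup model that always answers.
    return f"backup answer for: {text}"


robust = with_fallbacks(flaky_model, [backup_model])
results = batch(robust, ["hi", "bye"])
print(results)
```

The point is that the calling code does not change: the same `robust` callable handles one input or many, and the failure of the primary model is hidden behind the fallback.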

Ease of Use:

LangChain Expression Language is designed to be user-friendly. It offers a common interface for all objects, making interactions seamless. Plus, there are numerous guides and resources available for beginners.

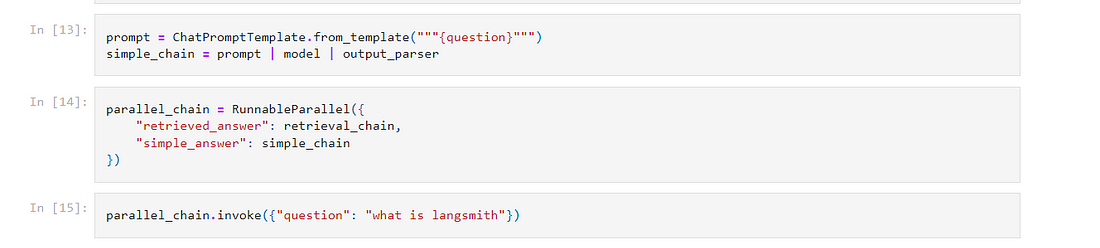

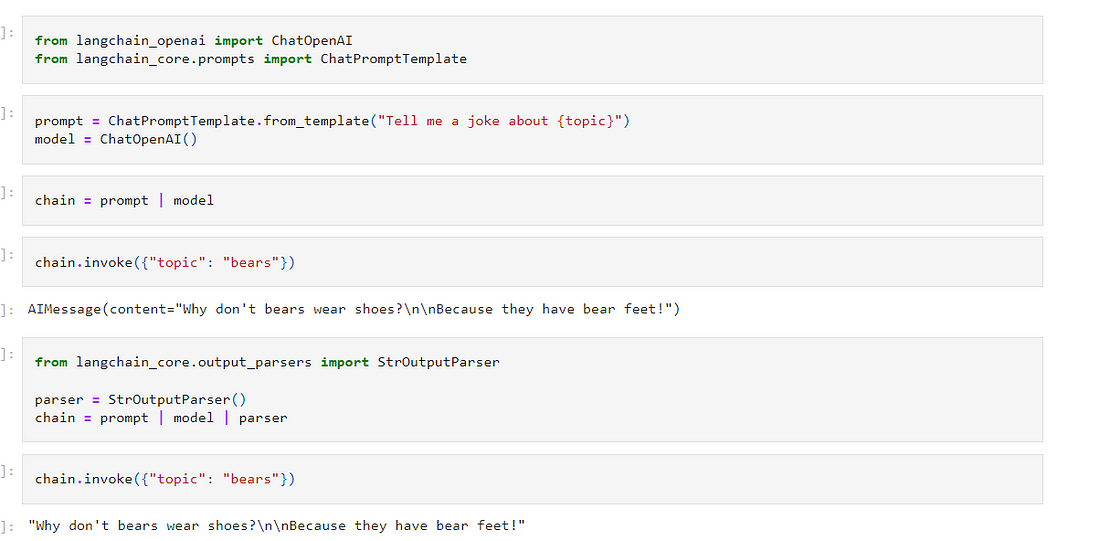

Practical Applications:

We'll walk through a simple example using LangChain Expression Language. Imagine creating a chain that includes a prompt, a language model, and an output parser. With just a few lines of code, you can invoke this chain and get a result back.
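LCEL composes steps with the `|` operator. The sketch below imitates that idea in plain Python so you can see what the pipe is doing. It is a toy under stated assumptions: the `Step` class and the fake model are invented here and are not LangChain classes.

```python
from typing import Any, Callable


class Step:
    """A tiny composable unit: wraps a function and supports `a | b`."""

    def __init__(self, func: Callable[[Any], Any]):
        self.func = func

    def __or__(self, other: "Step") -> "Step":
        # `a | b` builds a new step that runs a, then feeds its result to b.
        return Step(lambda value: other.invoke(self.invoke(value)))

    def invoke(self, value: Any) -> Any:
        return self.func(value)


# Hypothetical pieces standing in for a prompt template, a chat model,
# and an output parser.
prompt = Step(lambda inputs: f"Tell me a joke about {inputs['topic']}")
fake_model = Step(lambda text: {"role": "assistant",
                                "content": f"(model reply to: {text})"})
output_parser = Step(lambda message: message["content"])

chain = prompt | fake_model | output_parser
result = chain.invoke({"topic": "bears"})
print(result)
```

Because every piece exposes the same `invoke` method, swapping the prompt, the model, or the parser does not require touching the rest of the chain.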

Advanced Applications:

LangChain Expression Language simplifies the process for more complex tasks, like retrieval-augmented generation (RAG). You can easily add information sequentially to a dictionary and pass it along different steps, enhancing the final output.
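The "add information to a dictionary and pass it along" pattern can be sketched like this. Again this is plain Python for illustration only: `assign`, `fake_retriever`, `build_prompt`, and the `DOCS` data are hypothetical names, not part of LangChain.

```python
from typing import Any, Callable, Dict, List

State = Dict[str, Any]


def assign(key: str, func: Callable[[State], Any]) -> Callable[[State], State]:
    """Return a step that copies the state and adds one new key to it."""
    def step(state: State) -> State:
        return {**state, key: func(state)}
    return step


# Hypothetical in-memory "knowledge base" keyed by a keyword.
DOCS = {"langsmith": "LangSmith is a tool for tracing LLM applications."}


def fake_retriever(state: State) -> List[str]:
    """Return every document whose keyword appears in the question."""
    question = state["question"].lower()
    return [text for keyword, text in DOCS.items() if keyword in question]


def build_prompt(state: State) -> str:
    """Combine the retrieved context and the question into one prompt."""
    context = "\n".join(state["context"])
    return f"Context:\n{context}\n\nQuestion: {state['question']}"


pipeline = [assign("context", fake_retriever), assign("prompt", build_prompt)]

state: State = {"question": "What is LangSmith?"}
for step in pipeline:
    state = step(state)

print(state["prompt"])
```

Each step only adds a key, so later steps can still read everything earlier steps produced, including the original question.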

What is Streaming?

In generative AI, streaming refers to delivering responses from AI models in real time, as they are generated. This is vital because responses from language models can take time, and streaming shows users that the system is actively working.

Why is Streaming Important?

- User Experience: Keeps users engaged by showing progress in real time.

- Complex Operations: Essential for applications involving multiple AI calls, like chains or agents, where each step can take time.

LangChain 0.1.0 and Streaming

LangChain 0.1 has been developed with a strong emphasis on streaming capabilities. Thanks to the LangChain Expression Language, objects created for AI applications now support various streaming methods.

Key Streaming Methods in LangChain:

- Stream Method: Delivers tokens as they’re generated.

- Async Stream Method: For asynchronous environments.

- Intermediate Step Streaming: Useful for complex chains of any type, to show the intermediate steps as they happen.
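The difference between the synchronous and asynchronous streaming methods can be sketched with ordinary Python generators. This is a toy illustration, not LangChain code: the `stream` and `astream` functions below simply split a fixed string into word-sized chunks to imitate tokens arriving one at a time.

```python
import asyncio
from typing import AsyncIterator, Iterator, List


def stream(text: str) -> Iterator[str]:
    """Yield a reply piece by piece instead of returning it all at once."""
    for token in text.split(" "):
        yield token + " "


async def astream(text: str) -> AsyncIterator[str]:
    """Same idea for asynchronous code: yield chunks without blocking."""
    for token in text.split(" "):
        await asyncio.sleep(0)  # hand control back to the event loop
        yield token + " "


async def collect(text: str) -> List[str]:
    """Gather all chunks from the async stream into a list."""
    return [chunk async for chunk in astream(text)]


sync_chunks = list(stream("streaming keeps users informed"))
async_chunks = asyncio.run(collect("streaming keeps users informed"))
print(sync_chunks)
```

In a real application you would print or send each chunk as soon as it arrives instead of collecting them into a list.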

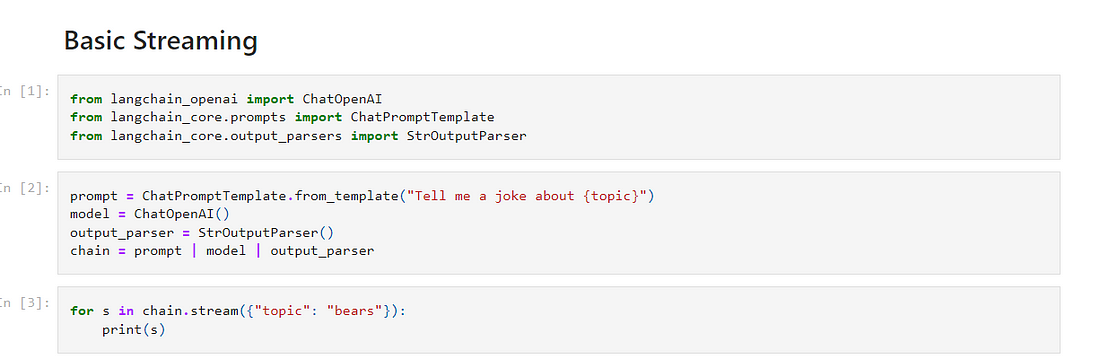

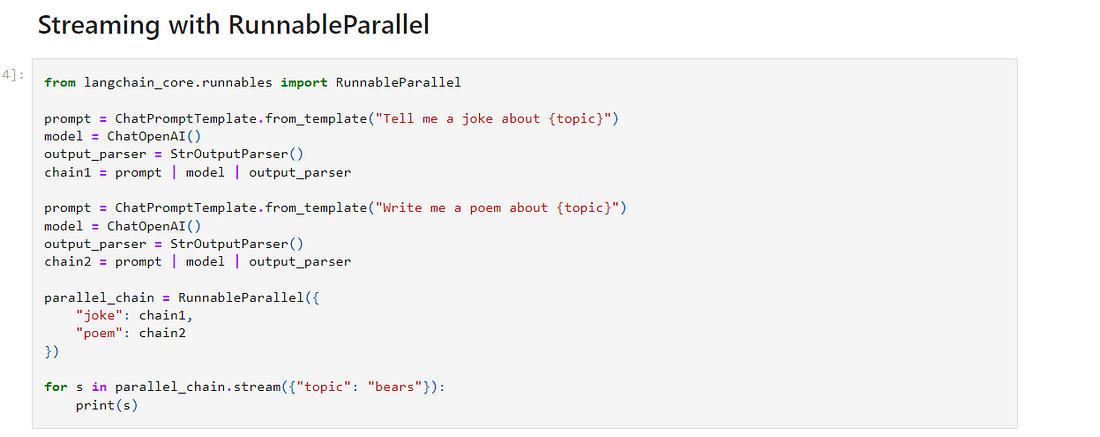

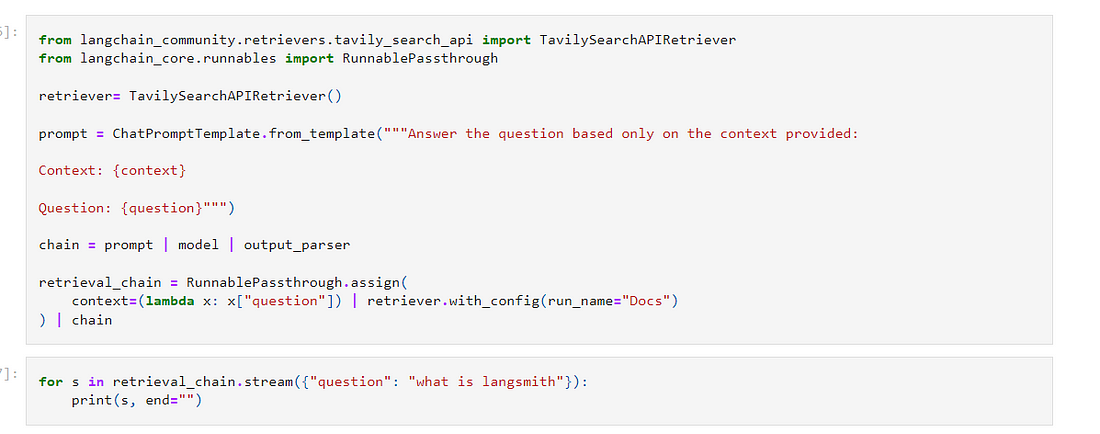

Demonstration with a Notebook:

- Basic Streaming: We create a simple chain (prompt, model, output parser) and demonstrate real-time streaming of responses.

- Parallel Streaming: Showcasing streaming with parallel chains, like telling a joke and writing a poem simultaneously. This illustrates how responses from different tasks intermingle in real time.

- Stream Log Method: Useful for revealing intermediate steps in complex processes, especially in retrieval-augmented generation (RAG). This allows users to see the documents fetched as part of the AI’s decision-making process.
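To picture how chunks from parallel chains intermingle, here is a small sketch in plain Python. It is a simplification under stated assumptions: `fake_stream` and `stream_parallel` are hypothetical helpers, and the strict round-robin order is only for illustration, since in a real run the order depends on when each model emits its tokens.

```python
from itertools import zip_longest
from typing import Dict, Iterator, List


def fake_stream(text: str) -> Iterator[str]:
    """Pretend model stream: yield one word at a time."""
    for word in text.split():
        yield word


def stream_parallel(branches: Dict[str, Iterator[str]]) -> Iterator[Dict[str, str]]:
    """Round-robin over branches, yielding {branch_name: chunk} for each chunk."""
    names: List[str] = list(branches)
    for row in zip_longest(*branches.values()):
        for name, chunk in zip(names, row):
            if chunk is not None:  # this branch has already finished
                yield {name: chunk}


chunks = list(stream_parallel({
    "joke": fake_stream("Why did"),
    "poem": fake_stream("Roses are red"),
}))
for chunk in chunks:
    print(chunk)
```

Tagging every chunk with the name of the branch it came from is what lets the consumer reassemble the joke and the poem separately.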

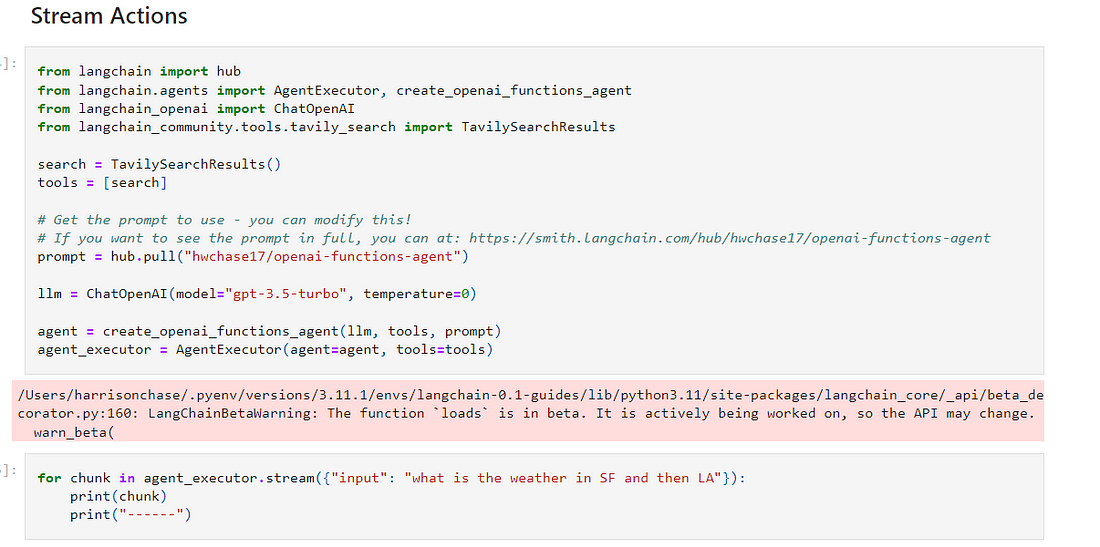

Streaming with Agents:

Agents in LangChain can perform multiple actions, and it’s important to stream these actions to the user. The agent executor in LangChain streams actions taken, not just the final tokens, providing a clearer view of the process.

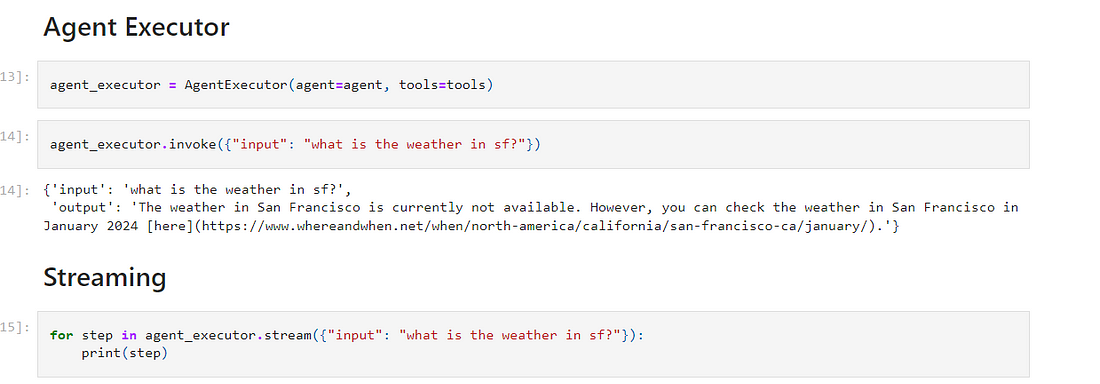

Practical Example with Agents:

- We demonstrate an agent performing a weather query. Streaming shows each action taken by the agent, like fetching weather data for different cities, and the subsequent responses from the language model.
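Here is a rough sketch of what "streaming actions, not just tokens" looks like. It is plain Python with canned data: `WEATHER`, `get_weather`, and `stream_agent` are hypothetical, and the event dictionaries are my own shape for illustration, not the format LangChain emits.

```python
from typing import Dict, Iterator, List

# Hypothetical canned weather data standing in for a real weather tool.
WEATHER = {"San Francisco": "foggy", "Los Angeles": "sunny"}


def get_weather(city: str) -> str:
    """Toy tool: look the city up in the canned data."""
    return WEATHER.get(city, "unknown")


def stream_agent(cities: List[str]) -> Iterator[Dict[str, str]]:
    """Yield one event per action, one per observation, then the final answer."""
    observations = []
    for city in cities:
        yield {"type": "action", "tool": "get_weather", "input": city}
        result = get_weather(city)
        observations.append(f"{city}: {result}")
        yield {"type": "observation", "output": result}
    yield {"type": "final", "output": "; ".join(observations)}


events = list(stream_agent(["San Francisco", "Los Angeles"]))
for event in events:
    print(event)
```

A user interface can render each event as it arrives, so the user sees "looking up San Francisco" before the final answer is ready.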

Closing Thoughts:

Streaming in LangChain brings a dynamic and interactive element to AI applications, enhancing user experience significantly. Whether it’s a simple query or a complex series of actions, streaming ensures transparency and engagement.

What is Output Parsing?

Output parsing is the process of transforming the output from a language model (typically a string or chat message) into a format that's more useful for downstream applications. It's a critical step in making the output of language models actionable and versatile.

New Developments in Output Parsing:

- Comprehensive Table of Output Parsers: LangChain 0.1 introduces a detailed table listing various output parsers, their attributes, and best use cases, helping users choose the right one for their needs.

- Emphasis on Streaming: Recognizing the challenges of streaming in output parsing, LangChain 0.1.0 focuses on ensuring that parsers can handle streaming data efficiently, even when dealing with complex formats like JSON or XML.

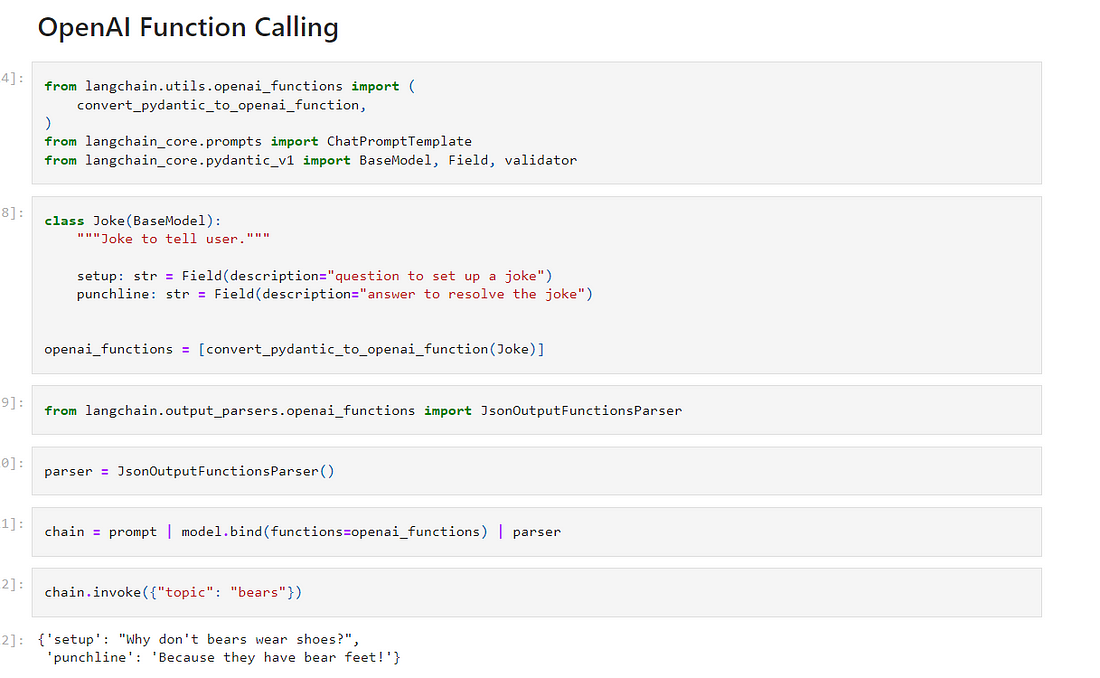

Practical Examples:

- Simple Output Parser: We demonstrate a basic parser that converts a chat message into a string, making it more usable for non-chat applications.

- OpenAI Function Calling: Exploring the use of function calling, a feature that returns outputs in a structured format, we show how LangChain 0.1 simplifies working with these structured outputs using output parsers.
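Both examples can be sketched in a few lines of plain Python. The `ChatMessage` class here is a toy stand-in that I made up, and the two parser functions are hypothetical; the only thing borrowed from the real world is that OpenAI function calls carry their arguments as a JSON-encoded string.

```python
import json
from dataclasses import dataclass, field
from typing import Any, Dict


@dataclass
class ChatMessage:
    """Toy chat message; real chat-model messages carry more fields."""
    content: str
    additional_kwargs: Dict[str, Any] = field(default_factory=dict)


def parse_to_string(message: ChatMessage) -> str:
    """Simplest possible parser: keep only the text of the message."""
    return message.content


def parse_function_arguments(message: ChatMessage) -> Dict[str, Any]:
    """Decode the JSON-encoded arguments of a function call into a dict."""
    call = message.additional_kwargs["function_call"]
    return json.loads(call["arguments"])


plain = ChatMessage(content="Why did the bear cross the road?")
structured = ChatMessage(
    content="",
    additional_kwargs={
        "function_call": {
            "name": "joke",
            "arguments": '{"setup": "Why?", "punchline": "Because."}',
        }
    },
)

print(parse_to_string(plain))
print(parse_function_arguments(structured))
```

The first parser is useful when downstream code just wants text; the second turns a structured response into a dictionary you can index by field name.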

Developer Experience:

LangChain 0.1 focuses on a smooth developer experience. One of the highlights is using Pydantic to define schemas easily, which is crucial for function calling. Pydantic allows for the creation of JSON schemas through intuitive Python classes, enhancing the ease of use and efficiency.

Streaming in Output Parsing:

We illustrate how LangChain 0.1 handles streaming in output parsing, a critical feature for real-time applications. This includes streaming responses for partially completed JSON strings, demonstrating the robustness of the parsers.
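To show why streaming JSON is tricky, and one way to cope with it, here is a minimal sketch in plain Python. It is not LangChain's parser: `parse_partial_json` and `stream_json` are hypothetical helpers that close any open strings and brackets in the text received so far and try to parse the result.

```python
import json
from typing import Any, Iterable, Iterator, Optional


def parse_partial_json(text: str) -> Optional[Any]:
    """Close any open strings/brackets and try to parse; None if still invalid."""
    closers = []
    in_string = False
    escaped = False
    for char in text:
        if in_string:
            if escaped:
                escaped = False
            elif char == "\\":
                escaped = True
            elif char == '"':
                in_string = False
        elif char == '"':
            in_string = True
        elif char == "{":
            closers.append("}")
        elif char == "[":
            closers.append("]")
        elif char in "}]" and closers:
            closers.pop()
    candidate = text + ('"' if in_string else "") + "".join(reversed(closers))
    try:
        return json.loads(candidate)
    except json.JSONDecodeError:
        return None


def stream_json(chunks: Iterable[str]) -> Iterator[Any]:
    """Yield a new snapshot each time the accumulated text parses differently."""
    buffer, last = "", None
    for chunk in chunks:
        buffer += chunk
        parsed = parse_partial_json(buffer)
        if parsed is not None and parsed != last:
            last = parsed
            yield parsed


chunks = ['{"setup": "Why', ' did the bear', '", "punch', 'line": "Because"}']
snapshots = list(stream_json(chunks))
for snapshot in snapshots:
    print(snapshot)
```

Note that the third chunk produces no snapshot: a key without a value cannot be completed into valid JSON, so the sketch simply waits for more text.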

What are Retrieval-Based Applications?

Retrieval-based applications are a popular choice in LangChain. They involve fetching context to pass to language models, allowing them to respond more accurately. This approach is especially useful for incorporating current event data or private data into responses.

Why Retrieval-Based Applications?

These applications are key for integrating language models with unique, real-time, or specific data sources that the model was not originally trained on.

Key Components of Retrieval in LangChain:

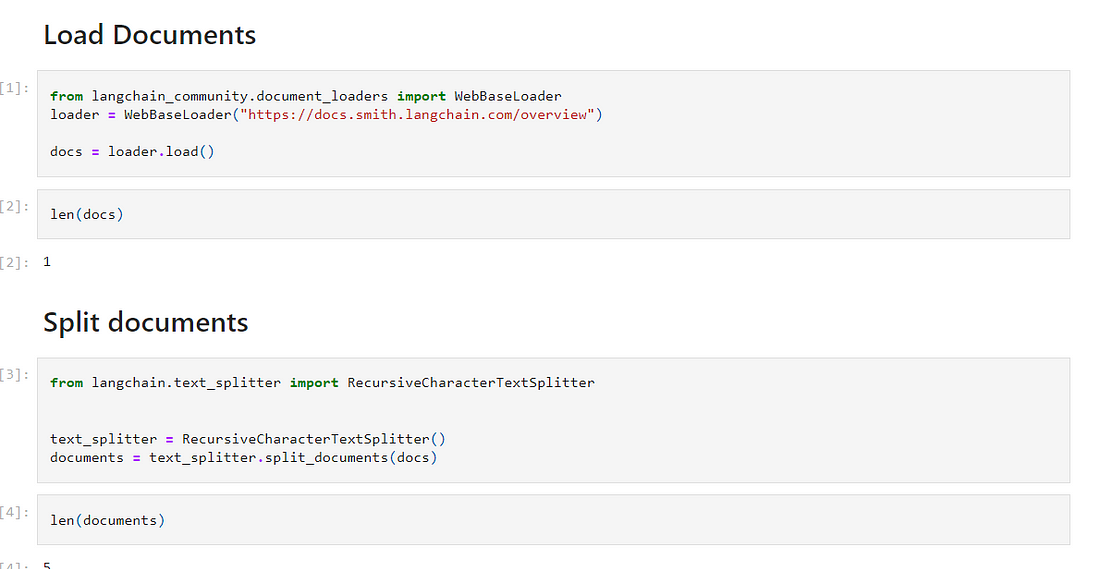

- Document Loaders: The first step involves loading data from various sources like Slack, Notion, files, or S3. LangChain offers over 100 different document loaders for this purpose.

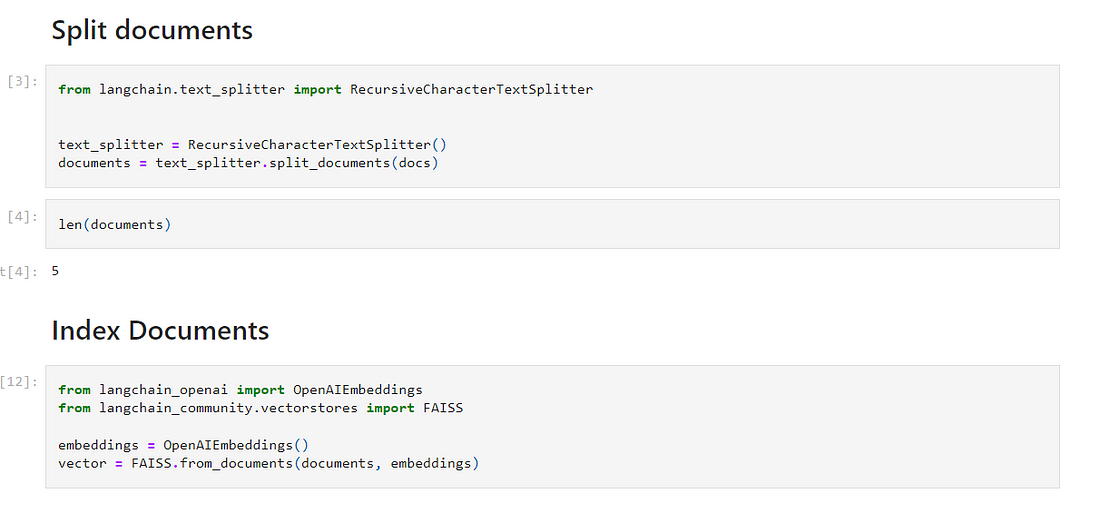

- Text Splitting: Next is splitting large documents into smaller, more manageable chunks. This can be done in various ways depending on the document type, with over 15 text splitters available.

- Indexing and Embeddings: Once split, these chunks are indexed. This process includes creating embeddings for each chunk using one of many embedding models, followed by storing these in a vector store.

- Advanced Retrieval Methods: After indexing, the next step is retrieval. LangChain provides multiple methods for this, including basic similarity searches and more complex techniques like multi-query retrievers.
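The four steps above (load, split, embed and index, retrieve) can be sketched end to end in plain Python. Everything here is a toy under stated assumptions: the "embedding" is just a bag-of-words count, and `split_text`, `embed`, `cosine`, and `ToyVectorStore` are hypothetical names rather than LangChain components.

```python
import math
import re
from collections import Counter
from typing import List, Tuple


def split_text(text: str) -> List[str]:
    """Split a document into one chunk per sentence."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def embed(text: str) -> Counter:
    """Toy 'embedding': a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


class ToyVectorStore:
    """In-memory index of (chunk, embedding) pairs."""

    def __init__(self) -> None:
        self.rows: List[Tuple[str, Counter]] = []

    def add_texts(self, texts: List[str]) -> None:
        for text in texts:
            self.rows.append((text, embed(text)))

    def similarity_search(self, query: str, k: int = 1) -> List[str]:
        query_vector = embed(query)
        ranked = sorted(self.rows,
                        key=lambda row: cosine(query_vector, row[1]),
                        reverse=True)
        return [text for text, _ in ranked[:k]]


# "Loading" is just a hard-coded string here.
document = (
    "LangSmith records traces of every run. "
    "Text splitters break long documents into chunks. "
    "Vector stores index embeddings for similarity search."
)
store = ToyVectorStore()
store.add_texts(split_text(document))
top = store.similarity_search("How are long documents split into chunks?", k=1)
print(top)
```

A real application would swap each toy piece for a proper loader, splitter, embedding model, and vector store, but the flow of data between the steps stays the same.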

Building an End-to-End Retrieval Application:

We’ll walk through a practical example using LangChain. We start by loading and splitting documentation about LangSmith. Then, we index these documents using OpenAI embeddings and an in-memory vector store. Next, we retrieve documents using a query and pass them to a language model for the final response.

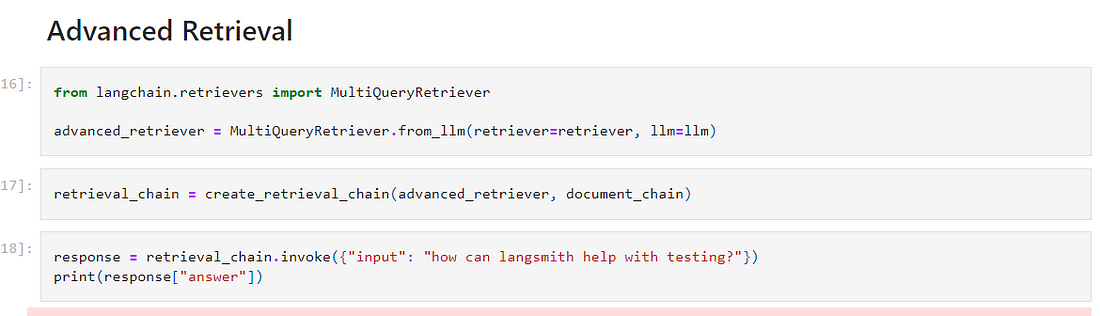

Showcasing Advanced Retrieval:

We also demonstrate an advanced retrieval method using a multi-query retriever. This approach generates multiple queries, retrieves documents for each, and combines them for the final language model input, ideal for complex queries.
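The multi-query idea (generate several queries, retrieve for each, merge the results) can be sketched as follows. This is plain Python for illustration only: the corpus, the keyword retriever, and the hard-coded query variants are hypothetical, and in practice a language model would write the variants.

```python
import re
from typing import Callable, List, Set

CORPUS = [
    "LangSmith records traces of every run.",
    "Debug mode logs every prompt and response.",
    "Vector stores index embeddings for similarity search.",
]


def keywords(text: str) -> Set[str]:
    """Lower-cased words longer than four letters."""
    return {w for w in re.findall(r"[a-z]+", text.lower()) if len(w) > 4}


def keyword_retriever(query: str) -> List[str]:
    """Toy retriever: return documents sharing a keyword with the query."""
    wanted = keywords(query)
    return [doc for doc in CORPUS if wanted & keywords(doc)]


def fake_query_generator(question: str) -> List[str]:
    # Hypothetical: in practice an LLM would rewrite the question several ways.
    return [question, "How do I see traces?", "Which mode logs each prompt?"]


def multi_query_retrieve(question: str,
                         generate: Callable[[str], List[str]],
                         retrieve: Callable[[str], List[str]]) -> List[str]:
    """Retrieve for every query variant and merge, dropping duplicates."""
    seen, merged = set(), []
    for query in generate(question):
        for doc in retrieve(query):
            if doc not in seen:
                seen.add(doc)
                merged.append(doc)
    return merged


docs = multi_query_retrieve("How can I debug my chain?",
                            fake_query_generator, keyword_retriever)
print(docs)
```

The original question alone finds only the debug-mode document; the rephrased query about traces pulls in the LangSmith document as well, which is the benefit of asking the same thing several ways.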

Resources and Customization:

LangChain is all about flexibility and depth in retrieval applications. Whether you’re building a basic retrieval system or a complex multi-tenant application, LangChain has the resources and modular components to support your needs.

Closing Thoughts:

Retrieval-based applications with LangChain open up a world of possibilities in AI-driven data processing and responses. With its wide range of tools and methods, LangChain is well suited to anyone looking to integrate language models with personalized or real-time data.

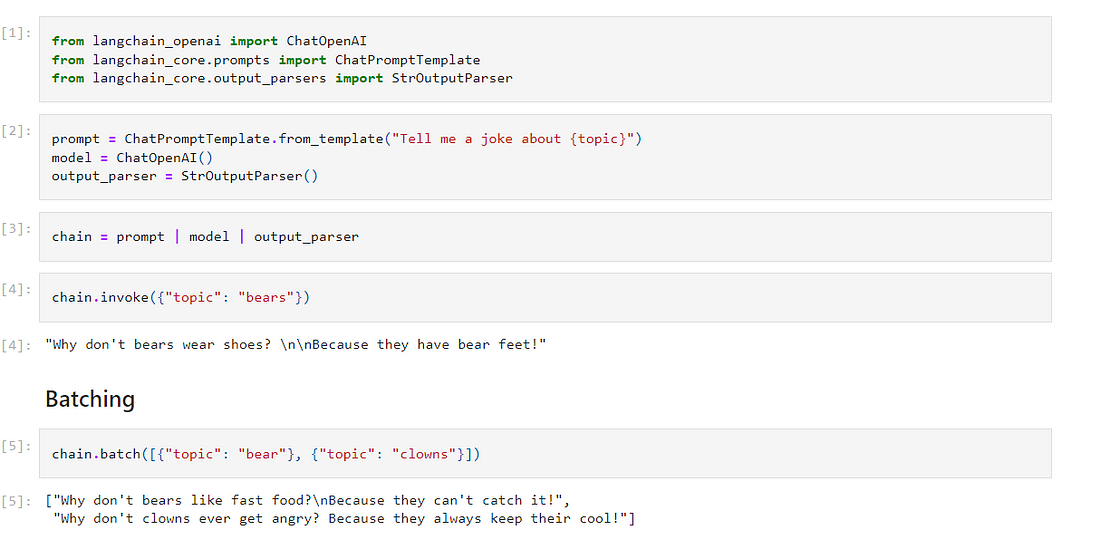

What are Agents in 0.1?

Agents in LangChain are about enhancing interaction with language models. They’re designed to reason about actions, take these actions, and often work in a loop until a task is completed. Agents are key in automating complex tasks and decision-making processes.

Key Improvements in LangChain 0.1:

- Ease of Understanding: LangChain 0.1 makes understanding and customizing agents simpler.

- Reliability: Enhanced to provide more reliable agent workflows.

- Conceptual Guidance: A new conceptual page has been added, detailing agents, their actions, and intermediate steps, making it easier to grasp their functionality.

Agent Components Explained:

- Agent Action: When a language model decides to take action.

- Agent Finish: When a language model completes its task.

- Intermediate Steps: The actions and observations made by the agent up to a point.

Tools and Toolkits:

These are representations of actions that a language model can take, paired with the functions to execute these actions. They form the backbone of how agents operate in LangChain.

Agent Executor:

The agent executor is like a loop mechanism. It repeatedly calls the language model, figures out the next action, executes it and keeps track of all actions until completion.
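The loop described above can be sketched in a few lines of plain Python. This is a simplified illustration, not LangChain's `AgentExecutor`: the `AgentAction` and `AgentFinish` classes below are stripped-down stand-ins of my own, and `fake_planner` is a hypothetical replacement for the language model.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple, Union


@dataclass
class AgentAction:
    """The model decided to call a tool."""
    tool: str
    tool_input: str


@dataclass
class AgentFinish:
    """The model decided it is done."""
    output: str


Steps = List[Tuple[AgentAction, str]]  # intermediate (action, observation) pairs


def fake_planner(question: str, steps: Steps) -> Union[AgentAction, AgentFinish]:
    """Hypothetical stand-in for the LLM: call the calculator once, then finish."""
    if not steps:
        return AgentAction(tool="calculator", tool_input="6 * 7")
    return AgentFinish(output=f"The answer is {steps[-1][1]}")


def calculator(expression: str) -> str:
    """Toy tool that only understands 'a * b'."""
    left, _, right = expression.split()
    return str(int(left) * int(right))


def run_agent(question: str,
              planner: Callable[[str, Steps], Union[AgentAction, AgentFinish]],
              tools: Dict[str, Callable[[str], str]],
              max_iterations: int = 5) -> Tuple[str, Steps]:
    """Ask the planner, run the chosen tool, record the step, repeat."""
    steps: Steps = []
    for _ in range(max_iterations):
        decision = planner(question, steps)
        if isinstance(decision, AgentFinish):
            return decision.output, steps
        observation = tools[decision.tool](decision.tool_input)
        steps.append((decision, observation))
    raise RuntimeError("agent did not finish within max_iterations")


answer, steps = run_agent("What is 6 times 7?", fake_planner,
                          {"calculator": calculator})
print(answer)
```

The `max_iterations` guard is a design choice worth keeping in any real loop, so that a confused model cannot call tools forever.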

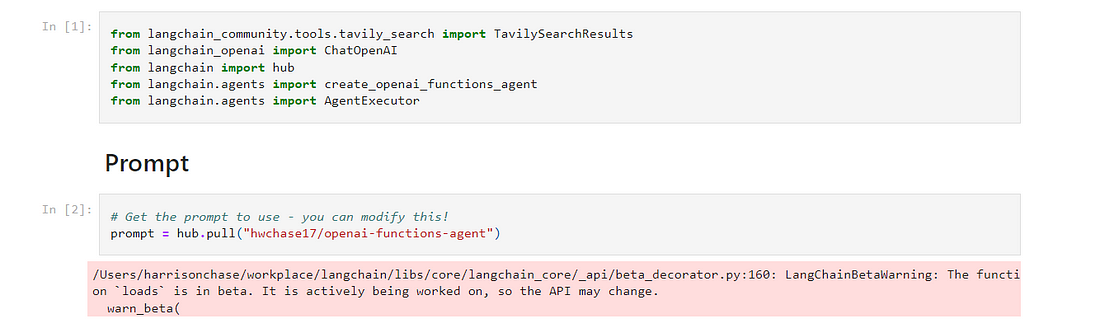

Building an Agent:

We’ll walk through a practical example. First, we import the necessary components, load the agent’s prompt, choose a language model, and select tools. Then, we combine these elements to create our agent. The agent executor is used to execute the agent’s actions and observe the results.

Streaming in Agents:

In LangChain 0.1, agents can stream steps, not just tokens. This means you can see each action taken by the agent and the results of those actions in real time, enhancing transparency and user engagement.

Resources for Building Custom Agents:

LangChain 0.1.0 provides comprehensive guides for building custom agents, offering control over inputs, formatting, prompts, and output parsing. Whether you need a basic agent or a complex one, these resources are invaluable.

Closing Thoughts:

Agents in LangChain 0.1.0 represent a significant step in making language model interactions more dynamic and practical. From automated decision-making to complex task execution, agents are a game-changer in AI workflows.

Conclusion:

LangChain 0.1.0 enhances user experience in building generative AI applications by improving observability, composability, streaming capabilities, and retrieval-based application workflows.

It simplifies the integration of language models with external tools, streamlines output parsing, and introduces efficient methods for data retrieval and processing.

Additionally, LangChain 0.1.0 places significant emphasis on agents, making them easier to understand and customize and more reliable, thereby facilitating more complex and automated interactions with language models.

REFERENCE:

- https://python.langchain.com/docs/get_started/introduction

- https://github.com/hwchase17/langchain-0.1-guides/blob/master/streaming.ipynb

- https://github.com/hwchase17/langchain-0.1-guides/blob/master/composability.ipynb

- https://github.com/hwchase17/langchain-0.1-guides/blob/master/output_parsers.ipynb

- https://github.com/hwchase17/langchain-0.1-guides/blob/master/agents.ipynb