As AI technology advances, AI agents are quickly becoming partners in problem-solving, creativity, and innovation. CrewAI is a framework built to put teams of such agents to work.

Can you imagine? In just a few minutes, you can turn an idea into a complete landing page, which is exactly what we achieved together with CrewAI.

CrewAI is a cutting-edge alternative to AutoGen that lets you assemble teams of AI agents to automate tasks.

In this video, you’ll learn what CrewAI is, how it is designed, how it differs from AutoGen and ChatDev, and how to use CrewAI, LangChain, and Solar or OpenHermes, powered by Ollama, to build a super AI agent.

What is CrewAI?

CrewAI is a cutting-edge framework for orchestrating role-playing, autonomous AI agents, allowing these agents to collaborate and solve complex tasks efficiently.

Key features of CrewAI include:

- Role-based agent design: CrewAI allows you to customize AI agents with specific roles, goals, and tools.

- Autonomous inter-agent delegation: Agents can autonomously delegate tasks and consult with each other, improving problem-solving efficiency.

- Flexible task management: Users can define tasks with custom tools and dynamically assign them to agents.

- Local model integration: CrewAI can integrate with local models through tools such as Ollama, which is useful for specialized tasks or data privacy considerations.

- Process-based operations: Currently supports sequential execution of operations, with plans to support more complex processes such as consensus and hierarchical processes in the future.


CrewAI vs. AutoGen vs. ChatDev

AutoGen:

- AutoGen is good at creating conversational agents that work well together, but it has no built-in concept of a process. Orchestrating the interactions between agents requires additional programming, which can become complex and cumbersome as tasks grow in size.

ChatDev:

- ChatDev introduced the concept of the process into the field of AI agents, but its implementation is quite rigid. Customization in ChatDev is limited and not suitable for production environments, which may hinder scalability and flexibility in real-world applications.

CrewAI:

- CrewAI is built with production in mind. It offers the flexibility of AutoGen’s conversational agents and the structured process approach of ChatDev, but without the rigidity. CrewAI’s processes are designed to be dynamic and adaptable, fitting seamlessly into both development and production workflows.

Implementing CrewAI

1- Installing Ollama

We begin by heading over to Ollama.ai and clicking the download button.

2- Download Ollama for your OS

You can download Ollama for Mac and Linux. At the time of writing, the Windows version is listed as coming soon.

3- Move Ollama to Applications

Drag and drop Ollama into the Applications folder. This step is only for Mac users. After completing it, Ollama is fully installed on your device. Click the Next button.

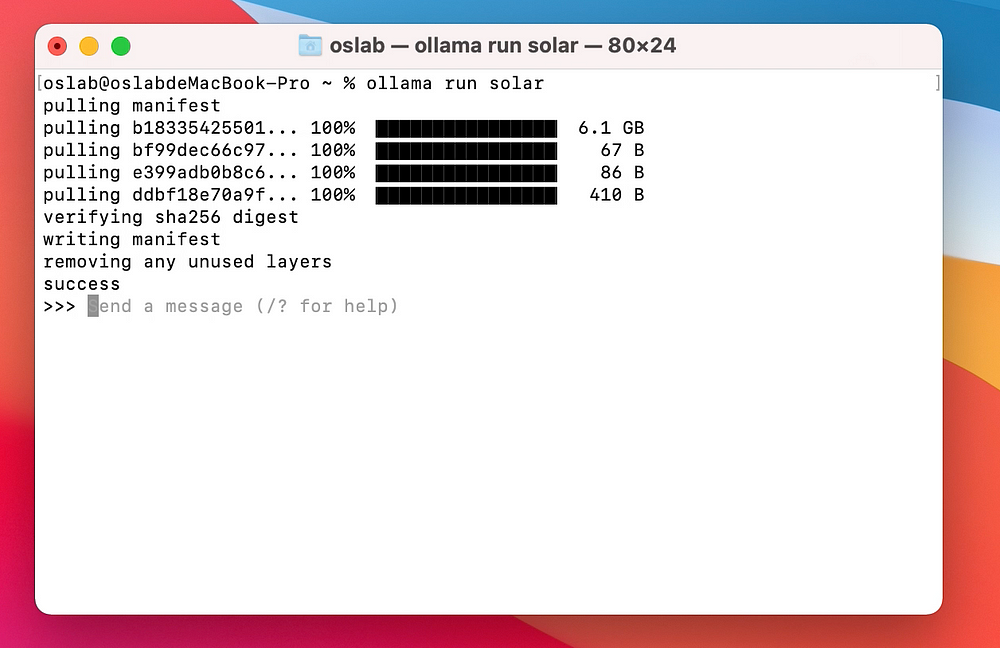

4- Installing and deploying OpenHermes, Solar, or other LLMs

Now we need to install the LLM that we want to use locally. Let’s run the following command. Use the lowercase model name: on Linux, “ollama run Solar” fails with a “pull model manifest: file does not exist” error.

ollama run solar

Ollama will now download Solar, which can take a few minutes depending on your internet speed. Once it’s installed, you can start talking to it.

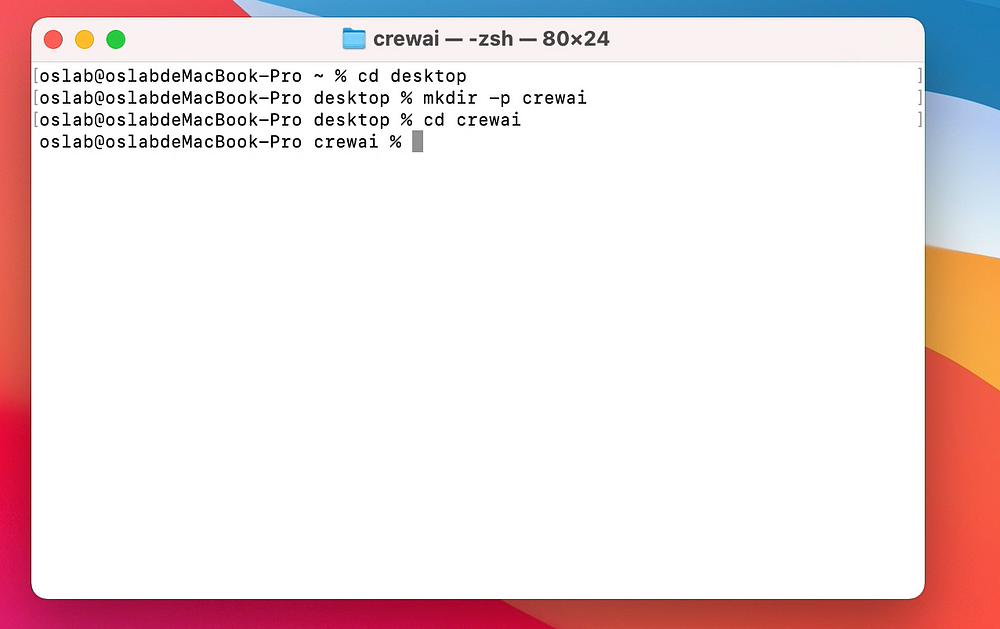
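Besides the interactive prompt, a running Ollama instance can also be queried from Python. The sketch below assumes Ollama is serving its local HTTP API on the default port 11434 and that the model has already been pulled; the helper names `build_generate_request` and `ask` are made up for this illustration and are not part of the tutorial script.

```python
import json
import urllib.request

# Assumption: Ollama is running locally and listening on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(model: str, prompt: str) -> str:
    """Send the prompt to the local model and return the generated text."""
    with urllib.request.urlopen(build_generate_request(model, prompt)) as resp:
        return json.loads(resp.read())["response"]


# Example call (needs a running Ollama server with the model pulled):
# print(ask("solar", "Say hello in one sentence."))
```

If the call fails with a connection error, the Ollama application is most likely not running yet.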

5- Create a folder

Let’s create a folder named crewai and navigate into it.

6- Install CrewAI and dependencies

We create a virtual environment with “python3.11 -m venv crew”, activate it with “source crew/bin/activate”, and then run “pip install crewai”.
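To confirm that the environment from this step is really the one in use, a quick check can be run with the activated interpreter. This is a minimal sketch; the function name `in_virtualenv` is hypothetical.

```python
import sys


def in_virtualenv() -> bool:
    """Return True when the interpreter runs inside a venv-style environment."""
    # Inside a venv, sys.prefix points at the environment folder while
    # sys.base_prefix still points at the base Python installation.
    return sys.prefix != getattr(sys, "base_prefix", sys.prefix)


print("Python", sys.version.split()[0], "| virtual environment active:", in_virtualenv())
```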

7- Installing necessary libraries

Before we start, let’s install the required libraries. Create a file named requirements.txt and add the dependencies below, one per line.

unstructured

langchain

Jinja2>=3.1.2

click>=7.0

duckduckgo-search

After that, open the terminal in VS Code and run the command below.

pip install -r requirements.txt
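After the install finishes, it can be worth checking that every package is actually visible to the active interpreter. The sketch below uses only the standard library; the `REQUIRED` list simply mirrors requirements.txt plus crewai, and `missing_packages` is a hypothetical helper name.

```python
from importlib.metadata import PackageNotFoundError, version

# Distribution names taken from requirements.txt, plus crewai from step 6.
REQUIRED = ["unstructured", "langchain", "Jinja2", "click", "duckduckgo-search", "crewai"]


def missing_packages(names):
    """Return the names from `names` that are not installed in this environment."""
    missing = []
    for name in names:
        try:
            version(name)  # raises PackageNotFoundError if the package is absent
        except PackageNotFoundError:
            missing.append(name)
    return missing


print("Missing packages:", missing_packages(REQUIRED) or "none")
```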

8- Import libraries

We import Agent, Task, Crew, and Process from crewai, and then DuckDuckGoSearchRun from langchain.tools to gather information from the web.

An instance of DuckDuckGoSearchRun is created and stored in the variable search_tool.

We import Ollama from langchain.llms to load the models. We are going to use Solar and OpenHermes for our experiment.

from crewai import Agent, Task, Crew, Process

import os

from langchain.tools import DuckDuckGoSearchRun

search_tool = DuckDuckGoSearchRun()

from langchain.llms import Ollama

ollama_openhermes = Ollama(model="openhermes")

ollama_solar = Ollama(model="solar")  # lowercase, matching "ollama run solar"

9- Researcher

Let’s create an agent that performs tasks, makes decisions, and communicates with other agents. Its parameters are:

- role: Indicates that the agent’s primary function is to conduct research.

- goal: The researcher’s goal is to find methods to grow a specified YouTube channel and increase its subscriber count.

- backstory: Gives the agent its persona, here an AI research assistant that helps with research activities and simplifies certain tasks.

- tools: The list of tools available to the agent, which here contains search_tool.

- verbose: Set to True, so the agent prints detailed logs, outputs, and explanations as it works.

- llm: The large language model the agent uses. In this case, ollama_openhermes is passed.

- allow_delegation: Set to False, so this agent is not permitted to delegate its tasks to other agents.

researcher = Agent(

role='Researcher',

goal='Research methods to grow this channel Gao Dalie (高達烈) on youtube and get more subscribers',

backstory='You are an AI research assistant',

tools=[search_tool],

verbose=True,

llm=ollama_openhermes, # Ollama model passed here

allow_delegation=False

)

10- Writer

Next comes an agent that acts as the writer. Its goal is to write compelling and engaging reasons why someone should join the Gao Dalie (高達烈) YouTube channel. As with the researcher, allow_delegation is set to False, so the writer does not hand its work to another agent.

writer = Agent(

role='Writer',

goal='Write compelling and engaging reasons as to why someone should join Gao Dalie (高達烈) youtube channel',

backstory='You are an AI master mind capable of growing any youtube channel',

verbose=True,

llm=ollama_openhermes, # Ollama model passed here

allow_delegation=False

)

11- Task

task1 and task2 are assigned to the researcher agent: the first investigates the Gao Dalie (高達烈) YouTube channel, and the second looks for reliable methods to grow a channel.

task3 is assigned to the writer agent, which compiles a list of actions for Gao Dalie (高達烈) to take to grow the channel.

task1 = Task(description='Investigate Gao Dalie (高達烈) Youtube channel', agent=researcher)

task2 = Task(description='Investigate sure fire ways to grow a channel', agent=researcher)

task3 = Task(description='Write a list of tasks Gao Dalie (高達烈) must do to grow his channel', agent=writer)

12- Crew and Process

Crew: Defines a crew with researcher and writer as its members. Process.sequential: Uses a process in which tasks are executed one after another. The result of each task is passed on to the next task as additional context.

crew.kickoff(): Tells the crew to start work.

crew = Crew(

agents=[researcher, writer],

tasks=[task1, task2, task3],

verbose=2,

process=Process.sequential

)

result = crew.kickoff()

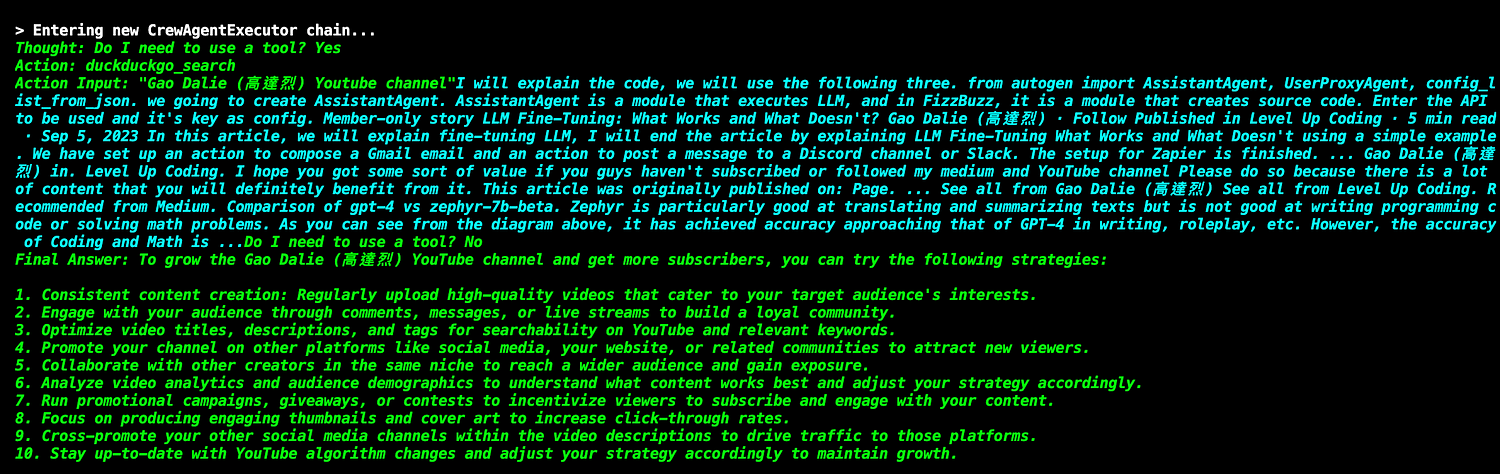

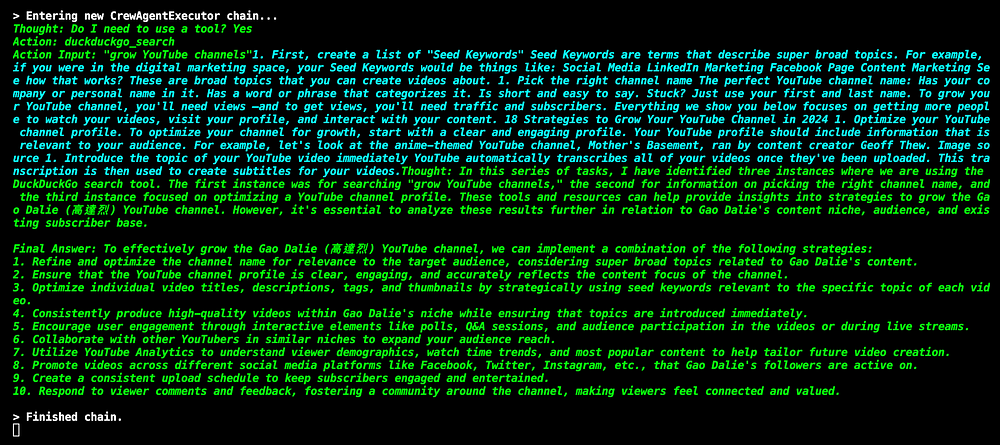
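To make the idea of a sequential process concrete, here is a toy illustration in plain Python. It is not how CrewAI is implemented; it only mimics the behavior described above, where each task's output becomes context for the next one. All names (`run_sequential`, `toy_researcher`, `toy_writer`) are hypothetical.

```python
from typing import Callable, List, Tuple

# A "worker" is any function that takes (task description, context so far)
# and returns the updated context as text.
Worker = Callable[[str, str], str]


def run_sequential(steps: List[Tuple[str, Worker]]) -> str:
    """Run the steps in order, feeding each result into the next step."""
    context = ""
    for description, worker in steps:
        context = worker(description, context)
    return context


def toy_researcher(description: str, context: str) -> str:
    # Stand-in for an agent: appends a research note to the context.
    return f"{context}[research: {description}] "


def toy_writer(description: str, context: str) -> str:
    # Stand-in for an agent: appends a draft built on the earlier notes.
    return f"{context}[draft: {description}]"


final = run_sequential([
    ("investigate the channel", toy_researcher),
    ("find growth methods", toy_researcher),
    ("write the to-do list", toy_writer),
])
print(final)
```

The final string contains all three entries in order, which shows that the writer step sees what both research steps produced.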

13- Output

When the crew completes its work, the script prints a simple dividing line followed by the crew’s result.
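The printing step itself is not shown in the listing, so here is a minimal sketch of what it could look like. The `format_result` helper and the divider width are assumptions; in the tutorial script, `result` would be the value returned by crew.kickoff() rather than the placeholder used here.

```python
def format_result(result, width=22):
    """Return a divider line of '#' characters followed by the result text."""
    divider = "#" * width
    return f"{divider}\n{result}"


# Placeholder standing in for the value returned by crew.kickoff().
result = "placeholder for the crew's final answer"
print(format_result(result))
```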

OpenHermes

Here is the result with OpenHermes as the writer and Solar as the researcher.

Solar

This time OpenHermes takes on the role of the researcher and Solar takes the role of the writer. This change in roles showcases how different models adapt to different creative processes.
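One way to keep track of which model plays which role across the two runs is a small mapping that gets swapped between runs. This is only a sketch with hypothetical names (`FIRST_RUN`, `swap_models`); it holds model names as strings and does not create any agents itself.

```python
# First run as described above: Solar researches, OpenHermes writes.
FIRST_RUN = {"researcher": "solar", "writer": "openhermes"}


def swap_models(assignment):
    """Return a new mapping with the researcher and writer models exchanged."""
    return {"researcher": assignment["writer"], "writer": assignment["researcher"]}


# Assumption: in the tutorial script, each name would be wrapped as
# Ollama(model=name) and passed as llm= to the matching Agent.
for run_name, assignment in [("first run", FIRST_RUN), ("second run", swap_models(FIRST_RUN))]:
    print(run_name, assignment)
```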


Conclusion:

CrewAI is not only an effective tool for solving the problem of collaboration between AI agents; it also reshapes the relationship between humans and AI.

It brings the capabilities of AI assistants to their fullest, promoting the widespread application of AI in all walks of life. As CrewAI matures, AI will become an important force in collaborative work within enterprises.

I look forward to seeing more solutions for efficiently managing tasks with tools like CrewAI in the future. I will be back with more useful information next time. Thank you.

For Linux:

This doesn’t work: ollama run Solar

pulling manifest

Error: pull model manifest: file does not exist

This does: ollama run solar


Thank you for reaching out. Yes, it works with Ollama, but you need to install Ollama on your local machine. It only worked on Mac, not on Windows.

It worked flawlessly.

Man, you are awesome. I will buy you coffee, buddy.

Best

Thank you so much. If you are interested in the AI space, please consider subscribing to my channel.

GaoDalie_AI : https://www.youtube.com/@GaoDalie_AI/videos