LangChain embeddings transform text into numerical arrays, or vectors. These vectors capture the relationship patterns between words, and the numbers in them serve as multi-dimensional coordinates for measuring similarity.

What Are LangChain Embeddings?

LangChain embeddings are pieces of text that have been converted into numerical vectors. Because related words end up with related numbers, the distance between two vectors indicates how similar the underlying texts are.

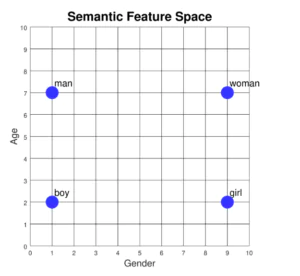

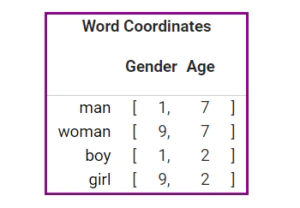

For a simple example, consider the words “man”, “woman”, “boy”, and “girl”. Two of these words relate to males, and two to females. Similarly, two indicate adults, while the other two indicate children. We can plot these words on a chart where the x-axis denotes gender and the y-axis denotes age.

Semantic features, such as gender and age, capture some of the meaning behind each word. By linking a numerical value to each feature, we can give each word its own set of coordinates.
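To make the chart idea concrete, here is a minimal sketch in Python. The coordinate values are invented for illustration (they are not produced by any embedding model), and the `word_vectors` and `distance` names are hypothetical.

```python
import math

# Hypothetical coordinates: x = gender (-1 male, +1 female),
# y = age (-1 child, +1 adult). The values are made up for illustration.
word_vectors = {
    "man": (-1.0, 1.0),
    "woman": (1.0, 1.0),
    "boy": (-1.0, -1.0),
    "girl": (1.0, -1.0),
}


def distance(first, second):
    """Euclidean distance between the coordinates of two words."""
    return math.dist(word_vectors[first], word_vectors[second])


print(distance("man", "boy"))   # the two words differ only in age
print(distance("man", "girl"))  # the two words differ in age and gender
```

Words that share a feature sit closer together than words that share none. Real embeddings work on the same principle, only with far more dimensions and with features that are learned rather than hand-picked.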

The LangChain Embeddings class is designed as an interface for embedding providers like OpenAI, HuggingFace, Cohere, and more.

There are two methods in LangChain embeddings: embed_query() and embed_documents(). The documents method takes a list of texts, while the query method takes a single text. This distinction exists because some embedding model providers handle documents and search queries differently.
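The difference between the two methods is easiest to see in the shapes they return. The sketch below uses a hypothetical `ToyEmbeddings` class with a made-up `_embed` helper; it only mimics the two-method interface and does not call LangChain or any real provider.

```python
from typing import List


class ToyEmbeddings:
    """Hypothetical stand-in that mirrors the two-method interface."""

    def _embed(self, text: str) -> List[float]:
        # Placeholder "embedding": character count, word count, vowel share.
        vowels = sum(ch in "aeiou" for ch in text.lower())
        return [float(len(text)), float(len(text.split())), vowels / max(len(text), 1)]

    def embed_query(self, text: str) -> List[float]:
        # One text in, one vector out.
        return self._embed(text)

    def embed_documents(self, texts: List[str]) -> List[List[float]]:
        # A list of texts in, one vector per text out.
        return [self._embed(text) for text in texts]


toy = ToyEmbeddings()
query_vector = toy.embed_query("Hi there!")
doc_vectors = toy.embed_documents(["Hi there!", "Hello World!"])

print(len(query_vector))                      # values in the single vector
print(len(doc_vectors), len(doc_vectors[0]))  # vector count, values per vector
```

A real provider class would replace `_embed` with a call to its model, and may treat queries and documents differently inside each method.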

LangChain Embeddings 1: embed_query()

In our initial example, we input one text string and get its OpenAI text embedding. The process starts by setting up the necessary libraries.

Let’s get started.

We’ll set up a Python virtual environment and then install the required dependencies within it.

mkdir myproject
cd myproject
# or python3, python3.9, etc. depending on your setup
python -m venv env
source env/bin/activate

After setting that up, we can proceed to install our dependencies. For this tutorial, we require only LangChain and OpenAI.

OpenAI's text-embedding-ada-002 model is faster and cheaper than the previous generation of embedding models, and it is commonly used as a replacement for them. Additionally, we’ll use python-dotenv to load the OpenAI API key into our environment.

pip install openai langchain python-dotenv

LangChain is a sizable library, so its download might take some time. During the download process, please create a new file named .env and enter your API key into it. Here’s how you can do it:

OPENAI_API_KEY=Your-api-key-here

Now that we’re all set, let’s start!

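from dotenv import load_dotenv
from langchain.embeddings.openai import OpenAIEmbeddings

# Read OPENAI_API_KEY from the .env file into the environment
load_dotenv()

To use OpenAI embeddings, we import the OpenAIEmbeddings class from the “langchain.embeddings.openai” module and the load_dotenv function from python-dotenv. Next, we’ll configure the API key as an environment variable.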

You can acquire this secret API key, vital for accessing various OpenAI models, from the OpenAI platform. Just sign up, and you can find this key in the “view secret key” section of your profile. You can use this same key across different projects for a particular client.

The load_dotenv() call reads environment variables from the .env file. This approach is useful when you don’t want to embed API keys or other sensitive data directly in your code.
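To show the idea behind loading a .env file, here is a simplified sketch using only the standard library. It is not how python-dotenv is implemented; the `load_env_file` function and the `DEMO_EMBEDDINGS_API_KEY` variable are hypothetical names chosen for this illustration.

```python
import os
import tempfile


def load_env_file(path):
    """Toy loader: copy KEY=VALUE lines from a file into os.environ."""
    with open(path, encoding="utf-8") as handle:
        for line in handle:
            line = line.strip()
            # Skip blank lines, comments, and lines without an assignment.
            if not line or line.startswith("#") or "=" not in line:
                continue
            key, value = line.split("=", 1)
            # This sketch keeps any value that is already set in the environment.
            os.environ.setdefault(key.strip(), value.strip())


# Write a throwaway .env file so the example is self-contained.
with tempfile.TemporaryDirectory() as folder:
    env_path = os.path.join(folder, ".env")
    with open(env_path, "w", encoding="utf-8") as handle:
        handle.write("# demo file\nDEMO_EMBEDDINGS_API_KEY=placeholder-value\n")
    load_env_file(env_path)

print("DEMO_EMBEDDINGS_API_KEY" in os.environ)
```

Once the variable is in the environment, code can read it with `os.environ` instead of carrying the key in the source file.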

model = OpenAIEmbeddings()
input_text = "More ideas on My Pages"
outcome = model.embed_query(input_text)
print(outcome)
print(len(outcome))

In the provided code, we’re working with the OpenAIEmbeddings class. First, we make an instance of the class and save it in the “model” variable. We aim to convert the text “More ideas on My Pages” into a numerical embedding. We pass this text to the “embed_query” method of our model.

The embedding is saved in the “outcome” variable. We then call Python’s print() function twice: first to display the embedding’s decimal values, and then, together with len(), to show how many values it contains, which is the embedding’s dimensionality.

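Below is the start of the printed array, truncated here for space. The text-embedding-ada-002 model returns 1,536 values per embedding, so that is the length to expect from the second print() call when that model is in use: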

[-0.02140738449471774, 0.007983417276065918, 0.002181476920456058, -0.03561326872912931, -0.02399624705633922, 0.020434919810897255, -0.02448247939824946, -0.021381102571576993, -0.0011515176193821316, -0.011925845445564819, 0.0024147373702333988, 0.0008993664552707672, -0.011518460735721976, 0.0009428974038718204, 0.007543179230974563, 0.005588391813849369]

LangChain Embeddings 2: embed_documents()

Beyond embeddings for a single text, we can also derive them for multiple strings, as shown in this example.

To set up the required environment for our demonstration, let’s first install Python’s tiktoken library:

$ pip install tiktoken

The tiktoken package helps break down text into smaller pieces called tokens using a method called Byte Pair Encoding. It’s often used with OpenAI models.

Sometimes, the text we give is too long for a specific OpenAI model. In such cases, tiktoken helps by dividing the text into tokens, so it can be counted and split into pieces the model can accept. With that in mind, let’s proceed to the main example.
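The core idea of Byte Pair Encoding can be sketched in a few lines: start from single characters and repeatedly merge the most frequent adjacent pair into one token. This toy version is not tiktoken’s implementation (real tokenizers apply merges learned in advance from a large corpus), and the `most_frequent_pair` and `merge_pair` helpers are hypothetical names.

```python
from collections import Counter


def most_frequent_pair(tokens):
    """Return the adjacent token pair that occurs most often."""
    pairs = Counter(zip(tokens, tokens[1:]))
    return pairs.most_common(1)[0][0]


def merge_pair(tokens, pair):
    """Replace every occurrence of the pair with a single merged token."""
    merged, i = [], 0
    while i < len(tokens):
        if i + 1 < len(tokens) and (tokens[i], tokens[i + 1]) == pair:
            merged.append(tokens[i] + tokens[i + 1])
            i += 2
        else:
            merged.append(tokens[i])
            i += 1
    return merged


# Start from individual characters and apply three merge steps.
tokens = list("hello hello")
for _ in range(3):
    tokens = merge_pair(tokens, most_frequent_pair(tokens))

print(tokens)
print(len(tokens))
```

Each merge shortens the token list while the text itself is unchanged, which is why frequent character sequences end up as single tokens.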

from langchain.embeddings.openai import OpenAIEmbeddings

# Pass the API key directly instead of reading it from the environment
model = OpenAIEmbeddings(openai_api_key="sk-YOUR_API_KEY")

# The texts to embed, one embedding per string
strings = [
    "Hi there!",
    "Oh, hello!",
    "What's your name?",
    "My friends call me World",
    "Hello World!"
]

result = model.embed_documents(strings)
print(result)
print(len(result))

We import the OpenAIEmbeddings class from the “langchain.embeddings.openai” module. Unlike before, where we stored the API key in an environment variable, we now provide it directly when instantiating the class. Thus, there’s no need to call the “load_dotenv()” function in this case.

When we create the OpenAIEmbeddings instance, we give it the secret API key. Next, we list our text strings. The variable named “strings” holds five phrases: “Hi there!”, “Oh, hello!”, “What's your name?”, “My friends call me World”, and “Hello World!”

You can list multiple strings by placing a comma between each one. In our prior example, we used the embed_query() method, but that’s meant for just one string. For multiple strings, we use the embed_documents() method. We call this method on our model and pass the list of strings as input.

The results are stored in the “result” variable, which is a list containing one embedding per input string. We display it with the Python print() function. Additionally, we print len(result), which reports how many embeddings came back: five here, one for each string.

The start of the output is shown below, truncated for space:

[[-0.020247761347398342, -0.006958189484755433, -0.022615697444032124, -0.026338551437069965, -0.0370512496381446, 0.021438060526636025, -0.0060844584930895415, -0.009174172851354757, 0.008515709158560267, -0.016803488290746262, 0.026566481463444237, -0.007287421331152679, -0.013663123488130851, -0.024033928512289245, 0.0064073592085659966, -0.020057819969193976, 0.024578426260574018, -0.01467614448232833, 0.016461594182507436, -0.016790826028904682, -0.007186119045468414, -0.008072512298975886, 0.004871998958645219, -0.001625582474417045, -0.014701469937334073, -0.0059863222385269635, -0.0020545337073530345, -0.023058895234939092, 0.019715923998309983, -0.03122637822301716, 0.012764066575797924, 0.011314180784259437, -0.008427069600378874, -0.009630032904103303, -0.0017553757805674477, -0.027250269679921887, -0.008376418690367388, 0.0021779956497520016, 0.0235780684595407, -0.008756301446776119, 0.023388127081336335, 0.0008737307588352458, 0.009731334724126276, -0.014043006244539582, -0.017449289721699172, 0.010839326407425938,]]
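Once you have several embeddings, a common way to compare them is cosine similarity. The sketch below uses short made-up vectors in place of real model output so it runs without an API key; the `cosine_similarity` helper and the `sample_vectors` values are assumptions for illustration only.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Made-up 3-dimensional vectors standing in for real embeddings.
sample_vectors = {
    "Hi there!": [0.9, 0.1, 0.2],
    "Oh, hello!": [0.8, 0.2, 0.3],
    "What's your name?": [0.1, 0.9, 0.4],
}

greeting_score = cosine_similarity(sample_vectors["Hi there!"], sample_vectors["Oh, hello!"])
question_score = cosine_similarity(sample_vectors["Hi there!"], sample_vectors["What's your name?"])

print(round(greeting_score, 3))
print(round(question_score, 3))
```

With real output, you would pass two entries of “result” to the same function; scores closer to 1 suggest more similar texts.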

Conclusion

This article explored embeddings in LangChain. We looked at what an embedding is and how it works, then walked through practical examples of getting embeddings for both a single text and multiple texts using OpenAI.
