As LLMs boom, model sizes keep growing in line with scaling laws to improve performance, and recent LLMs have billions or tens of billions of parameters, or more. Running an LLM therefore requires a high-performance GPU with a large amount of memory, which is extremely costly.

When operating an LLM, inference speed is an important indicator of both service quality and operational cost.

This article covers vLLM, FlashAttention, and torch.compile. You'll see how to use each of them, and why vLLM turned out to be much faster than torch.compile and FlashAttention in my tests.

What is torch.compile?

torch.compile is intended to provide most of the speedups that PyTorch 2.0 offers.

torch.compile JIT-compiles PyTorch code into optimized kernels so that it runs faster. In most cases, it requires changing only one line of code.

How to use torch.compile?

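import torch
from transformers import AutoModelForCausalLM

# Load the model in bfloat16 on the GPU, without quantization.
model = AutoModelForCausalLM.from_pretrained(
    model_name,
    device_map="cuda",
    trust_remote_code=True,
    torch_dtype=torch.bfloat16,
    load_in_8bit=False,
    load_in_4bit=False,
    use_cache=False,
).eval()

# The one-line change: wrap the model with torch.compile.
model = torch.compile(model)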

What is FlashAttention?

FlashAttention significantly improves the efficiency and speed of training Transformers compared to existing attention mechanisms.

Additionally, FlashAttention can be extended to block-sparse attention, further enhancing its performance and scalability on long sequences.

Features of FlashAttention

FlashAttention is an optimized attention mechanism that makes training Transformer models faster and more efficient by reducing memory accesses and improving memory utilization. It does this with tiling: it operates on blocks of the attention matrix, so the entire matrix never has to be stored during the forward pass, and it recomputes the matrix during the backward pass.
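To make the tiling idea concrete, here is a minimal pure-Python sketch of blockwise ("online") softmax attention for a single query. It is an illustration under simplifying assumptions, not the FlashAttention CUDA kernel: the names `attention_full` and `attention_tiled` and the `block_size` parameter are made up for this example.

```python
import math


def attention_full(q, keys, values):
    """Reference version: compute every score first, then softmax, then mix."""
    scale = 1.0 / math.sqrt(len(q))
    scores = [scale * sum(a * b for a, b in zip(q, k)) for k in keys]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[j] for w, v in zip(weights, values)) / total
            for j in range(dim)]


def attention_tiled(q, keys, values, block_size=2):
    """Visit keys/values block by block, keeping only running statistics."""
    scale = 1.0 / math.sqrt(len(q))
    running_max = float("-inf")    # largest score seen so far
    running_sum = 0.0              # softmax denominator relative to running_max
    acc = [0.0] * len(values[0])   # unnormalized output accumulator
    for start in range(0, len(keys), block_size):
        k_blk = keys[start:start + block_size]
        v_blk = values[start:start + block_size]
        scores = [scale * sum(a * b for a, b in zip(q, k)) for k in k_blk]
        new_max = max(running_max, max(scores))
        # Rescale everything accumulated so far to the new maximum.
        correction = math.exp(running_max - new_max)
        running_sum *= correction
        acc = [a * correction for a in acc]
        for s, v in zip(scores, v_blk):
            w = math.exp(s - new_max)
            running_sum += w
            acc = [a + w * vj for a, vj in zip(acc, v)]
        running_max = new_max
    return [a / running_sum for a in acc]
```

Both functions return the same result up to floating-point error, but the tiled version only ever holds one block of scores at a time, which is the property that lets the real kernel avoid storing the full attention matrix.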

How To Use FlashAttention?

We use Scaled Dot-Product Attention (SDPA), a key component of Transformer models that computes attention scores from query, key, and value vectors. In SDPA, the dot products of the query vector with the key vectors are divided by the square root of the key dimension, which keeps the softmax from saturating and the gradients from vanishing.
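The effect of the scaling can be seen in a small pure-Python sketch. It is only an illustration: the helper names `softmax` and `attention_weights`, the random vectors, and the dimension of 256 are assumptions made for this example.

```python
import math
import random


def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(q, keys, scaled=True):
    """Softmax over query-key dot products, optionally divided by sqrt(d_k)."""
    d_k = len(q)
    scores = [sum(a * b for a, b in zip(q, k)) for k in keys]
    if scaled:
        scores = [s / math.sqrt(d_k) for s in scores]
    return softmax(scores)


random.seed(0)
d_k = 256
q = [random.gauss(0, 1) for _ in range(d_k)]
keys = [[random.gauss(0, 1) for _ in range(d_k)] for _ in range(8)]

# Largest attention weight without and with the 1/sqrt(d_k) scaling.
print(max(attention_weights(q, keys, scaled=False)))
print(max(attention_weights(q, keys, scaled=True)))
```

Without scaling, the dot products grow with the dimension and the softmax tends to put nearly all of its weight on one key; with scaling, the weights are spread more evenly.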

- From applying SDPA alone, I would expect a 2–3x speedup and a 10–20x improvement in memory efficiency.

I define a function infer_flash_compile that performs inference with a given model and tokenizer. The function encodes the input prompt with tokenizer.encode and stores the encoded tokens in token_ids. It then opens the torch.backends.cuda.sdp_kernel context manager to choose which SDPA kernel is used: enable_flash=True selects FlashAttention, while enable_math=False and enable_mem_efficient=False disable the other backends.

Within a torch.no_grad() block, the function generates an output sequence using the model’s generate method. This method accepts the encoded token_ids as input and specifies various generation parameters such as max_new_tokens, temperature, top_p, top_k, and repetition_penalty.

Finally, the function decodes the generated output sequence IDs back into text using the tokenizer.decode method, excluding special tokens, and returns the decoded text as the output of the function.

import torch

def infer_flash_compile(model, tokenizer, prompt):
    # Convert to the BetterTransformer fast path (requires the optimum package).
    model = model.to_bettertransformer()
    token_ids = tokenizer.encode(
        prompt,
        add_special_tokens=False,
        return_tensors="pt",
    )
    # Allow only the FlashAttention backend for scaled dot-product attention.
    with torch.backends.cuda.sdp_kernel(
        enable_flash=True,
        enable_math=False,
        enable_mem_efficient=False,
    ):
        with torch.no_grad():
            output_ids = model.generate(
                token_ids.to(model.device),
                max_new_tokens=256,
                temperature=0.8,
                top_p=0.95,
                top_k=50,
                repetition_penalty=1.10,
                do_sample=True,
                pad_token_id=tokenizer.pad_token_id,
                eos_token_id=tokenizer.eos_token_id,
            )
    # Decode only the newly generated tokens, skipping the prompt.
    return tokenizer.decode(
        output_ids.tolist()[0][token_ids.size(1):],
        skip_special_tokens=True,
    )

Combining FlashAttention and torch.compile

Here is how I combine FlashAttention and torch.compile:

def infer_flash_compile(model, tokenizer, prompt):
    model = model.to_bettertransformer()
    # Compile the converted model so that both optimizations apply.
    model = torch.compile(model)
    token_ids = tokenizer.encode(
        prompt,
        add_special_tokens=False,
        return_tensors="pt",
    )
    # rest of the code

FlashAttention-2 vs. FlashAttention

FlashAttention-2 improves upon FlashAttention in terms of memory saving and runtime speedup by optimizing work partitioning and reducing non-matmul FLOPs.

FlashAttention-2 achieves around 2× speedup over FlashAttention, reaching up to 73% of the theoretical max throughput in the forward pass and up to 63% in the backward pass.

Additionally, FlashAttention-2 yields significant speedup compared to FlashAttention in various settings, such as with or without a causal mask and different head dimensions, resulting in up to 225 TFLOPs/s per A100 GPU during end-to-end training of GPT-style models.

What is vLLM?

vLLM stands for “virtual Large Language Model.” It is an LLM (Large Language Model) serving system that incorporates the PagedAttention algorithm to efficiently manage memory usage for serving large language models.

vLLM aims to reduce memory waste and improve throughput by dynamically assigning physical blocks to logical blocks and optimizing the storage and access of key-value cache data. It is designed to enhance the performance of LLM serving systems by maximizing hardware utilization and minimizing latency.
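The mapping from logical to physical blocks can be pictured with a toy block table. This is a simplified sketch of the idea only, not vLLM's actual implementation: the class `PagedKVCache` and all of its methods are hypothetical names invented for this example.

```python
class PagedKVCache:
    """Toy block table: maps each sequence's logical blocks to physical blocks."""

    def __init__(self, num_physical_blocks, block_size):
        self.block_size = block_size
        self.free_blocks = list(range(num_physical_blocks))
        self.block_tables = {}  # seq_id -> list of physical block ids
        self.seq_lens = {}      # seq_id -> number of tokens stored

    def append_token(self, seq_id):
        """Reserve room for one more token, allocating a block only when needed."""
        table = self.block_tables.setdefault(seq_id, [])
        length = self.seq_lens.get(seq_id, 0)
        if length == len(table) * self.block_size:  # no block yet, or last one full
            if not self.free_blocks:
                raise MemoryError("no free physical blocks")
            table.append(self.free_blocks.pop(0))
        self.seq_lens[seq_id] = length + 1

    def locate(self, seq_id, token_index):
        """Translate a logical token position into (physical block, offset)."""
        if not 0 <= token_index < self.seq_lens.get(seq_id, 0):
            raise IndexError("token index out of range")
        logical_block, offset = divmod(token_index, self.block_size)
        return self.block_tables[seq_id][logical_block], offset

    def free(self, seq_id):
        """Return a finished sequence's blocks to the free pool."""
        self.free_blocks.extend(self.block_tables.pop(seq_id, []))
        self.seq_lens.pop(seq_id, None)


cache = PagedKVCache(num_physical_blocks=4, block_size=2)
for _ in range(3):
    cache.append_token("a")  # sequence "a" now spans two physical blocks
cache.append_token("b")      # sequence "b" gets its own block
```

Because blocks are handed out one at a time as a sequence grows, memory is not reserved up front for the maximum possible length, which is where the reduction in waste comes from.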

How To Use VLLM?

First, install vllm: open a terminal and run pip install. On Mac or Linux, you may need to use pip3 instead of pip.

pip install vllm

Once it is installed, we import LLM, SamplingParams, and destroy_model_parallel.

The LLM is initialized with parameters such as the model and tokenizer names or paths, along with the data type for internal processing (set to ‘float16’).

Sampling parameters such as temperature, top_p, and top_k are defined with the SamplingParams class to control the randomness and selection of tokens during text generation. Finally, text is generated from the input prompt using the initialized model and the sampling parameters.

from vllm import LLM, SamplingParams
# Note: the location of destroy_model_parallel depends on the vLLM version.
from vllm.model_executor.parallel_utils.parallel_state import destroy_model_parallel

model = LLM(
    model=model_name,
    tokenizer=tokenizer_name,
    dtype='float16',
)

sampling_params = SamplingParams(
    temperature=0.8,
    top_p=0.95,
    top_k=50,
)

# prompt is the input text to complete.
outputs = model.generate(
    prompt,
    sampling_params,
)
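To illustrate what temperature, top_k, and top_p do to the token distribution, here is a simplified pure-Python sketch. It is not vLLM's sampler: the function `filter_distribution` is a hypothetical helper written for this example, and real implementations differ in details such as tie handling.

```python
import math


def filter_distribution(logits, temperature=0.8, top_k=50, top_p=0.95):
    """Return {token_index: probability} after temperature, top-k and top-p."""
    # Temperature: values below 1 sharpen the distribution, above 1 flatten it.
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    ranked = sorted(((e / total, i) for i, e in enumerate(exps)), reverse=True)
    # top-k: keep only the k most likely tokens.
    ranked = ranked[:top_k]
    # top-p: keep the smallest prefix whose cumulative probability reaches top_p.
    kept, cumulative = [], 0.0
    for p, i in ranked:
        kept.append((p, i))
        cumulative += p
        if cumulative >= top_p:
            break
    # Renormalize the surviving tokens so they sum to 1.
    norm = sum(p for p, _ in kept)
    return {i: p / norm for p, i in kept}


candidates = filter_distribution([2.0, 1.0, 0.0, -1.0],
                                 temperature=1.0, top_k=3, top_p=0.9)
```

The next token would then be drawn at random from the returned distribution, so lower values of any of the three parameters make the output more deterministic.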

Experimental setup

- For each model and speedup method, we generate a response 100 times, measure the generation time and the number of tokens generated for each response, calculate the tokens per second (number of tokens / generation time) for each response, and compare the methods by their average token generation speed over the 100 iterations.

- The test environment is an Intel® Core™ i9-10980HK CPU with an NVIDIA GeForce RTX 3080 Laptop GPU, and the model under test is Microsoft phi-1.5 (1.3B).
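The measurement procedure can be sketched as a small harness. This is a minimal sketch under stated assumptions, not the script used for the results: `benchmark`, `generate_fn`, `count_tokens_fn`, and the injectable `timer` are hypothetical names, and `generate_fn` stands in for whichever model and speedup method is being measured.

```python
import time
from statistics import mean


def benchmark(generate_fn, count_tokens_fn, prompt, n_runs=100,
              timer=time.perf_counter):
    """Average tokens per second over n_runs generations.

    generate_fn(prompt) returns the generated text and count_tokens_fn(text)
    returns its token count; both are supplied by the caller.
    """
    speeds = []
    for _ in range(n_runs):
        start = timer()
        text = generate_fn(prompt)
        elapsed = timer() - start
        # Tokens per second for this single response.
        speeds.append(count_tokens_fn(text) / elapsed)
    # Average of the per-response speeds, as described in the setup.
    return mean(speeds)
```

With a real model, `generate_fn` could wrap a function such as infer_flash_compile and `count_tokens_fn` could be `lambda text: len(tokenizer.encode(text))`. When timing GPU code, the first run usually includes warm-up or compilation time, so it may be worth discarding.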

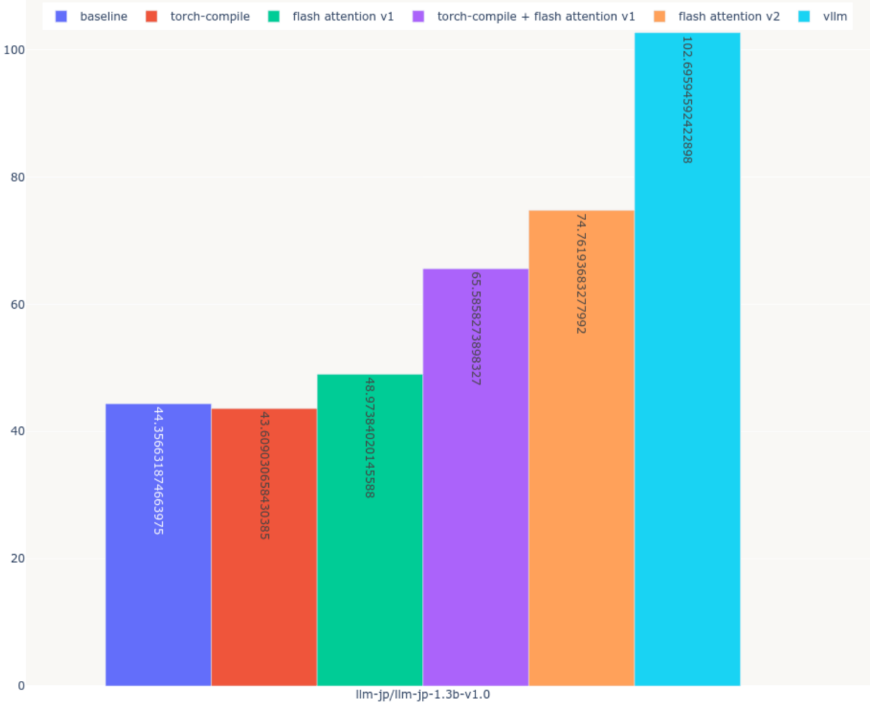

Experimental result

- Measurement time: 7984.71 seconds (approx. 2.2 hours)

Thoughts on the experiment:

Four of the five methods improved inference speed; one did not.

Among the five speedup methods, two, flash_attention_v2 and vllm, stood out for their improvement in inference speed.

Of the five, vllm delivered the largest improvement.

I consider flash_attention_v2’s 74 tokens/s to be sufficiently fast, while vllm reaches 102 tokens/s.

The average number of characters a human can speak per minute is approximately 300.

For instance, consider the following example sentence, which contains 319 characters.

I have strong communication skills and can earn trust by leveraging my listening abilities.

During my university days, I worked part-time at a shoe store. In customer service, communicating with customers is essential, so I made it a point to listen to their stories regularly.

Not only did I listen to customers who were looking for their ideal products, but I also sometimes asked questions to help them clarify what they were seeking. By adopting such customer service practices, I was able to receive compliments from customers, saying, 'It was good to have Mr. Ito assist me.' In your company, I aim to listen to customers and clients first, understand what they desire, and proactively make suggestions to earn trust and contribute effectively.

Tokenizing the passage above yields 187 tokens. A human needs about 60 seconds to speak it, whereas Microsoft phi-1.5 served with vllm at 102 tokens/s generates the same number of tokens in approximately 1.83 seconds.

Excluding speech synthesis, which was not tested this time, that is 32.78 times faster than a human. Compared with the 2.87 seconds needed without any speedup method, inference is 1.56 times faster.
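The arithmetic behind these figures can be checked with a few lines of Python. The sketch assumes the numbers are read as follows: 187 tokens in the passage, 102 tokens/s for vllm, about 60 seconds for a human speaker, and 2.87 seconds as the generation time without any speedup method. That last reading is an interpretation of the text, and the variable names are illustrative.

```python
tokens = 187              # tokens in the example passage
vllm_tokens_per_s = 102   # measured vllm speed
human_seconds = 60        # time for a human to speak the passage
baseline_seconds = 2.87   # assumed: generation time with no speedup method

# Time for vllm to generate the passage, rounded as in the text.
vllm_seconds = round(tokens / vllm_tokens_per_s, 2)

speedup_vs_human = human_seconds / vllm_seconds
speedup_vs_baseline = baseline_seconds / vllm_seconds

print(f"vllm time: {vllm_seconds} s")
print(f"vs human: {speedup_vs_human:.2f}x, vs baseline: {speedup_vs_baseline:.2f}x")
```

The ratios come out within rounding of the 32.78 and 1.56 quoted above, which suggests those figures were truncated rather than rounded.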

Conclusion:

We introduced, implemented, and compared five methods for improving the inference speed of a local LLM. This time we evaluated a small model with 1.3B parameters; if you have the computational resources, please consider verifying the speedup with a larger model.